Using ChatGPT in a law firm isn't some futuristic idea anymore. It's happening right now, reshaping how personal injury lawyers get work done. Attorneys are already using it to draft documents, summarize records, and boost firm efficiency. The conversation has shifted from whether we should use it to how we can use it safely and effectively.

The legal world is past the point of skepticism. We're now in the middle of active AI integration. Tools like ChatGPT aren't just novelties; they're becoming a standard part of the toolkit for associates and paralegals, especially in the fast-paced world of personal injury law. This rapid adoption is fueled by a real, pressing need to be more efficient in a field that's more competitive than ever.

But this new tech brings some serious challenges. Lawyers have to walk a fine ethical line, balancing the productivity gains against their core duties to clients.

A few key questions immediately come to mind:

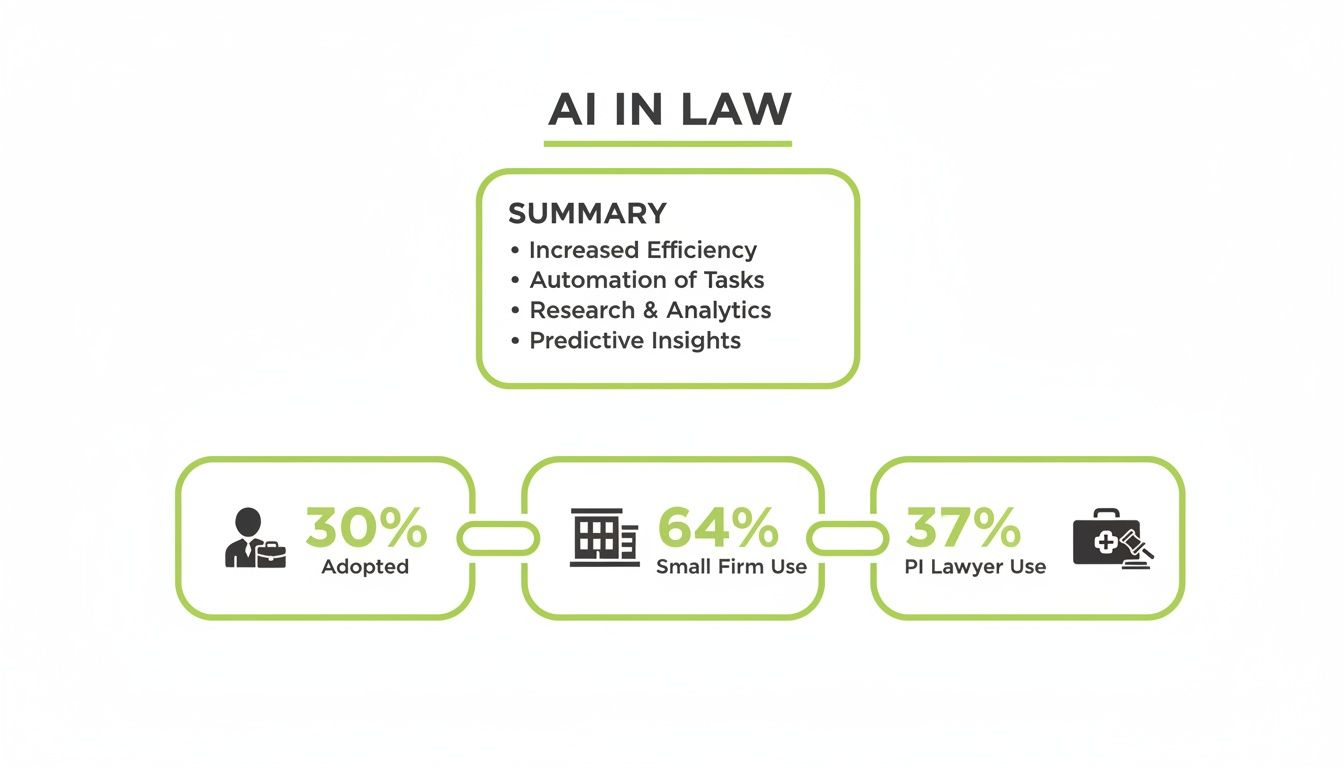

Interestingly, it’s not the giant, tech-heavy firms that are spearheading this shift. It's the solo practitioners and small law firms who are jumping in first. For example, lawyers can now easily record meetings and transcribe them with AI, turning what used to be a tedious manual task into a simple, automated process.

The numbers back this up. According to the 2026 Thomson Reuters survey report, generative AI use rose to 26% in 2025, with 45% of firms either using it or planning to make it central to their workflow within a year. The trend is most obvious in smaller practices.

This infographic breaks down some of the key adoption stats.

The data is clear: small firms and PI lawyers aren't sitting on the sidelines. They're grabbing tools that help them level the playing field.

This isn't about some future trend—it's about a tool that is already being used in your practice, whether you have a formal policy for it or not. Proactively addressing the risks is no longer optional.

Understanding this context is key. It helps frame the conversation not just around how to use a tool like ChatGPT, but also when to recognize its limits and switch to a specialized, secure system built for the unique demands of personal injury law.

Choosing the right tool is critical. While general-purpose AI like ChatGPT is powerful, it wasn't built with the security, confidentiality, or specific workflows of a personal injury firm in mind. That's where specialized platforms come in.

Here’s a quick breakdown of the key differences:

Ultimately, while ChatGPT can be a great starting point for non-sensitive tasks, the risks associated with handling client information are significant. For core legal work involving confidential data, a purpose-built AI operating system designed for personal injury law is the safer, more effective choice.

Drafting a compelling demand letter is part art, part science. It takes a solid grasp of the facts, a story that connects, and the right legal tone to show you mean business. This is where ChatGPT can be a game-changer for lawyers, acting like a high-powered paralegal to get you past writer's block and build a strong first draft.

The secret is to think beyond simple, one-off questions. You need to treat it like a conversation, guiding the AI from a broad story to a detailed, legally sound argument. You start with the foundational facts and then layer in refinements to make the letter hit harder.

This approach is quickly becoming standard practice. A 2025 AffiniPay/American Bar Association study found that 31% of legal professionals use AI personally for work, while only 21% of firms have officially implemented it.

First things first, you need to create a "master prompt." This is where you feed ChatGPT all the essential, anonymized facts of the case. The goal here isn't to get a finished letter; it's to get a structured, logical narrative that will serve as the skeleton for your demand. Think of it as organizing the story before you start writing.

For a classic rear-end collision case, your foundational prompt should be packed with specific, anonymized details laid out for total clarity.

Here’s what a good master prompt looks like in action:

Prompt Example: Foundational Narrative

"Act as a paralegal for a personal injury law firm. Your task is to draft the initial 'Statement of Facts' section for a demand letter based on the following anonymized details. Structure the narrative chronologically, starting with the incident and ending with the current status of the client's recovery.

A prompt this detailed gives ChatGPT everything it needs to generate a coherent, fact-based narrative—the very core of your letter.
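If you reuse the same master prompt across cases, it can help to assemble it from a fixed template so every draft starts from the same instructions. The following is a minimal Python sketch of that idea, not a prescribed workflow. The function name, the fact labels, and the bracketed sample values are hypothetical placeholders, and the code only builds the prompt text; it does not send anything to an AI service.

```python
# Instruction text taken from the example prompt above.
ROLE_AND_TASK = (
    "Act as a paralegal for a personal injury law firm. "
    "Your task is to draft the initial 'Statement of Facts' section for a "
    "demand letter based on the following anonymized details. "
    "Structure the narrative chronologically, starting with the incident and "
    "ending with the current status of the client's recovery."
)


def build_master_prompt(facts: dict[str, str]) -> str:
    """Combine the fixed instructions with a bulleted list of anonymized facts."""
    # One labeled bullet per fact keeps the input easy to scan and audit.
    fact_lines = [f"- {label}: {value}" for label, value in facts.items()]
    return ROLE_AND_TASK + "\n\n" + "\n".join(fact_lines)


# Hypothetical, already-anonymized facts (placeholders only, no real data).
example_facts = {
    "Client": "Plaintiff",
    "Incident type": "Rear-end collision",
    "Date of incident": "[Date of Incident]",
    "Injuries": "[Anonymized injury summary]",
    "Current status": "[Anonymized recovery status]",
}

prompt = build_master_prompt(example_facts)
print(prompt)
```

Keeping the facts in a separate structure also makes it easier for a reviewer to confirm that nothing identifying slipped into the prompt before it is pasted anywhere.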

Once that initial narrative is in place, the real magic begins. Now you can use a series of shorter, more targeted prompts to shape the tone, add legal weight, and weave in specific details.

Think of these follow-up prompts as a supervising attorney guiding a junior associate. You can instruct the AI to:

The point isn't to take the AI's first output and run with it. It's to use it as a dynamic tool to quickly test different angles, tones, and structures, saving you hours of manual redrafting.

This multi-prompt workflow turns ChatGPT from a simple text generator into a strategic partner. This same logic applies to other firm communications, too. For example, you can learn to utilize ChatGPT to write emails with similar efficiency.

Of course, the final, non-negotiable step is a thorough review by a qualified attorney. Every fact, legal citation, and strategic point must be verified to ensure the letter perfectly aligns with your firm’s strategy. For a deeper look at this entire workflow, check out our guide on how to use AI to draft effective demand letters.

Personal injury cases are built on mountains of medical records. A paralegal might spend days—or even weeks—sifting through hundreds of pages of physician notes, diagnostic reports, and billing statements just to build a coherent timeline. This is where ChatGPT can radically change your workflow, turning a daunting manual task into a manageable, AI-assisted process.

The goal isn't to let AI do the thinking for you. It's about using it as a powerful data extraction tool to create the first draft of a medical chronology or summary, which a human expert then meticulously verifies.

The biggest headache with medical records? They're completely unstructured. Key information is scattered across dozens of different formats and documents. ChatGPT is surprisingly good at identifying and organizing this scattered data, but only if you give it clear, specific instructions.

Your primary tool here is a well-designed prompt that acts as a template for extraction. This prompt tells the AI exactly what information to look for and how to format it. I've found that asking for a structured table is the best approach, since it's easy to copy directly into a spreadsheet for review.

Here’s a practical prompt you can adapt for your own cases:

Prompt Example: Medical Record Extraction

"Act as a paralegal specializing in personal injury law. Analyze the following anonymized medical record excerpt and extract the specified information. Present the output in a markdown table with these columns: Date of Service, Healthcare Provider, Location, Type of Visit/Treatment, Key Diagnoses, and Billing Amount.

[Paste the anonymized text from the medical record here]"

This structured approach forces the AI to focus on specific data points, reducing the chance of it generating a long, unhelpful narrative.
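Copying a markdown table into a spreadsheet by hand works, but the conversion can also be scripted. Here is a rough Python sketch, assuming the AI returns a simple pipe-delimited markdown table with no pipe characters inside any cell. The function names and the sample row are hypothetical, and the bracketed values are placeholders rather than real record data.

```python
import csv
import io


def markdown_table_to_rows(markdown: str) -> list[list[str]]:
    """Parse a simple pipe-delimited markdown table into a list of rows."""
    rows = []
    for line in markdown.strip().splitlines():
        line = line.strip()
        if not line.startswith("|"):
            continue  # ignore any prose the model added around the table
        cells = [cell.strip() for cell in line.strip("|").split("|")]
        # Skip the header separator row, e.g. |---|:---:|
        if all(cell and set(cell) <= set("-: ") for cell in cells):
            continue
        rows.append(cells)
    return rows


def rows_to_csv(rows: list[list[str]]) -> str:
    """Serialize rows as CSV text that a spreadsheet can open."""
    buffer = io.StringIO()
    csv.writer(buffer).writerows(rows)
    return buffer.getvalue()


sample_output = """
| Date of Service | Healthcare Provider | Location | Type of Visit/Treatment | Key Diagnoses | Billing Amount |
|---|---|---|---|---|---|
| [Date 1] | [Provider A] | [Clinic] | Emergency visit | Cervical strain | $1,250.00 |
"""

rows = markdown_table_to_rows(sample_output)
csv_text = rows_to_csv(rows)
print(csv_text)
```

The `csv` module quotes values that contain commas, so an amount like `$1,250.00` stays in a single spreadsheet cell.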

Let's be realistic: you can't just upload a 200-page PDF into ChatGPT and expect a perfect summary. The tool has context window limitations. To handle large documents, you have to break them down into manageable pieces—a technique we call "chunking."

The workflow is straightforward:

This methodical approach ensures you don't overwhelm the AI, leading to more accurate, granular results. It’s a bit more hands-on, but it's still far faster than reading and typing every single entry manually.
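For firms that want to script the splitting step, chunking can be sketched in a few lines of Python. This is an illustration under stated assumptions: the text has already been extracted and anonymized, paragraphs are separated by blank lines, and a character count stands in for the model's real limit, which is measured in tokens. The function name and the default of 8,000 characters are hypothetical choices, not values from any vendor's documentation.

```python
def chunk_text(text: str, max_chars: int = 8000) -> list[str]:
    """Split text into chunks of at most max_chars, preferring paragraph breaks."""
    chunks = []
    current = ""
    for paragraph in text.split("\n\n"):
        paragraph = paragraph.strip()
        if not paragraph:
            continue
        # A paragraph longer than the limit is hard-split into fixed slices.
        while len(paragraph) > max_chars:
            if current:
                chunks.append(current)
                current = ""
            chunks.append(paragraph[:max_chars])
            paragraph = paragraph[max_chars:]
        candidate = f"{current}\n\n{paragraph}" if current else paragraph
        if len(candidate) <= max_chars:
            current = candidate  # paragraph still fits in the current chunk
        else:
            chunks.append(current)  # close the full chunk and start a new one
            current = paragraph
    if current:
        chunks.append(current)
    return chunks


# Three 40-character paragraphs with a 100-character limit.
sample = "\n\n".join(["A" * 40, "B" * 40, "C" * 40])
chunks = chunk_text(sample, max_chars=100)
print([len(chunk) for chunk in chunks])
```

Breaking on paragraph boundaries keeps each visit note or report section intact where possible, which tends to make the per-chunk tables easier to reconcile afterward.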

The real power of this workflow is its scalability. Once you have a reliable prompt and process, you can delegate the "chunking and prompting" to a legal assistant, freeing up skilled paralegals for the critical verification stage.

Beyond building timelines, ChatGPT is also incredibly effective at creating concise summaries of specific medical encounters. This is a huge time-saver when you need to quickly understand the key takeaways from a long physician's note or an operative report before a deposition or client meeting.

For this task, your prompt should focus on narrative clarity rather than structured data.

Prompt Example: Visit Summary

"Please provide a concise, one-paragraph summary of the following anonymized doctor's visit note. Focus on the patient's reported symptoms, the doctor's physical examination findings, the official diagnosis, and the recommended treatment plan. Write in clear, plain language."

This gives you a quick-glance overview that can be invaluable when preparing other case documents. For those interested in the broader applications of this technology, our article exploring the benefits of AI in case file summarization offers more insights.

I cannot overstate this point: every piece of information extracted by ChatGPT must be verified by a human. AI models can "hallucinate" or misinterpret data, especially from scanned documents with complex formatting. Research has shown that even advanced models can generate erroneous information. For example, a 2023 study by Stanford researchers found that large language models, including GPT-4, "hallucinated" answers to legal questions up to 82% of the time.

Think of the AI-generated chronology as the first draft, not the final product. A skilled paralegal must perform a line-by-line review, cross-referencing each entry in the spreadsheet with the original source document.
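A simple script can help prioritize that review by flagging entries whose values never appear in the source text. The Python sketch below is a crude pre-check under stated assumptions, not a substitute for the line-by-line review. It uses exact string matching, the function name, field names, and sample data are hypothetical, and an entry that passes can still be wrong (for example, a real date attached to the wrong visit).

```python
def flag_unverified_entries(
    entries: list[dict[str, str]], source_text: str
) -> list[tuple[dict[str, str], list[str]]]:
    """Return entries that have at least one value not found verbatim in the source."""
    flagged = []
    for entry in entries:
        # Collect the fields whose value does not appear in the original record.
        missing = [
            field
            for field, value in entry.items()
            if value and value not in source_text
        ]
        if missing:
            flagged.append((entry, missing))
    return flagged


# Hypothetical anonymized source excerpt and AI-extracted rows.
source_excerpt = "Visit on [Date 1] at [Clinic]. Total charges: $1,250.00."
extracted = [
    {"Date of Service": "[Date 1]", "Billing Amount": "$1,250.00"},
    {"Date of Service": "[Date 2]", "Billing Amount": "$980.00"},
]

flagged = flag_unverified_entries(extracted, source_excerpt)
for entry, missing in flagged:
    print("Check first:", entry, "| not found in source:", missing)
```

Flagged rows are the likeliest hallucinations, so they are a sensible place for the reviewing paralegal to start.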

Using ChatGPT in this context isn't about replacing human expertise; it's about augmenting it. The AI handles the initial, time-consuming data entry, allowing your team to focus their valuable time on the high-level tasks of verification, analysis, and strategy. This partnership between human and machine is what unlocks true efficiency.

Okay, let's switch gears from how to use AI to how to use it safely. This is a critical conversation for any law firm. The efficiency you can get from tools like ChatGPT is real, but it comes with some serious ethical and compliance baggage. Every single prompt you type into a public AI tool can expose your firm and your clients to massive risks—from confidentiality breaches to professional sanctions.

Using these platforms isn't just about working faster. It's about upholding your duty of care in a totally new environment. The first step is to really understand these risks so you can build a smart, protective AI policy for your practice.

The most immediate and talked-about danger with tools like ChatGPT is "AI hallucinations." This is when the AI generates plausible-sounding but completely fabricated information. Think non-existent case law, made-up statutes, and phantom legal precedents. It happens more often than you'd think, even with the most advanced models.

The infamous Mata v. Avianca case, No. 22-cv-1461 (PKC) (S.D.N.Y. June 22, 2023), is the perfect cautionary tale. Two New York lawyers were sanctioned after submitting a legal brief packed with fake case citations straight from ChatGPT. They trusted the AI's output without doing their own homework, which led to a whole lot of professional embarrassment and court-ordered penalties.

This case underscores a fundamental rule for using AI in law: the attorney is always responsible for the final work product. AI is a tool. It's not a substitute for your professional judgment and diligent verification.

You absolutely have to verify every single piece of AI-generated content. Treat its output as a rough first draft that needs a rigorous human review. It’s the only way to avoid the risk of submitting flawed, inaccurate, or just plain false information to a court or opposing counsel.

ChatGPT use in the workplace is exploding, and it's creating a huge "shadow IT" problem for law firms. Data from Pew Research Center's 2023 survey shows that 28% of employed U.S. adults are now using it for work. And for those with postgraduate degrees—a group full of lawyers—that number shoots up to 45%.

What does that mean for you? Your associates are almost certainly using these tools right now, probably without any formal approval and completely bypassing your firm’s security protocols. You can learn more about the scale of this massive AI problem for law firms.

This unauthorized use creates some serious vulnerabilities. Every time an employee pastes sensitive case details or client information into a public AI platform, they're risking a data breach.

To get a handle on this, you need to create and enforce a clear, formal AI policy. This policy should be built around a few core principles.

For personal injury firms, the stakes are even higher because you're constantly dealing with sensitive medical records. Understanding your obligations here is non-negotiable, which is why we put together a detailed guide on navigating HIPAA compliance with AI. A formal policy isn’t just good practice—it's your best defense against ethical breaches and data security disasters.

While ChatGPT is a powerful tool for initial drafts and brainstorming, every growing personal injury firm will eventually hit its limits. It's a fantastic starting point, but there are clear signs that it's time to switch to a specialized legal AI platform. Recognizing these moments is key to scaling your practice responsibly while protecting your firm and your clients.

This isn't about ditching AI. It’s about graduating to a tool built for the specific, high-stakes demands of legal work. The manual, one-off workflows that are fine for occasional use in ChatGPT quickly become inefficient and risky as your case volume and team size grow.

The first and most critical trigger is when you start handling Protected Health Information (PHI) at scale. Personal injury law is built on medical records, and let's be clear: the free, public version of ChatGPT is not HIPAA compliant. Every time a paralegal pastes even a partially anonymized medical note into that public interface, you're flirting with a serious compliance violation.

A single data breach can lead to massive fines—up to $50,000 per violation, according to the U.S. Department of Health and Human Services—and do irreparable damage to your firm’s reputation.

Specialized platforms like ProPlaintiff.ai operate within a secure, closed-loop environment designed for legal work. This is a totally different ballgame.

When the risk of a HIPAA violation outweighs the convenience of a free tool, it is unequivocally time to upgrade.

Another obvious sign you've outgrown ChatGPT is when your case volume makes one-off prompting a major bottleneck. Imagine your firm takes on a multi-plaintiff case or just gets a surge of new clients. Suddenly, the task of summarizing dozens of medical files goes from manageable to completely overwhelming.

Sure, the "chunking" method—feeding small bits of a document into ChatGPT—works for a single file here and there. But it simply doesn't scale. A dedicated legal AI platform is built to solve this exact problem.

Practical Example: A paralegal has 1,500 pages of medical records for a single complex case. Using the chunking method with ChatGPT would require over 150 individual copy-paste actions, followed by compiling all the outputs. A specialized platform could process the entire batch of documents at once, producing a unified medical chronology in a fraction of the time.

This kind of capability moves AI from a neat trick for a single task to a core part of your firm's operational infrastructure. It’s what allows you to handle more cases with far greater efficiency.

The more you use ChatGPT, the more you’ll notice its inconsistency. The quality of the output depends entirely on the user's skill at writing the perfect prompt. A senior paralegal might craft a prompt that produces a brilliant summary, while a less-experienced user gets a vague or inaccurate result.

This creates a quality control nightmare. You end up spending more time reviewing and fixing the AI’s work than you save in the first place.

Legal AI platforms solve this by using pre-built, optimized workflows for specific tasks like creating medical chronologies or drafting demand letters. The prompts are already engineered for legal accuracy.

What does this mean for your team?

When the time you spend editing AI drafts starts canceling out the efficiency gains, that’s a clear signal your firm needs a more reliable solution. A dedicated platform provides the dependable, high-quality results that ChatGPT for lawyers promises but can't always deliver at scale.

As AI becomes more common in legal practice, a lot of practical questions come up. This quick-reference guide tackles the most common concerns we hear from lawyers and paralegals, reinforcing the key principles for using AI safely and effectively in your personal injury workflows.

Yes, but with some serious guardrails. The ethical use of ChatGPT for lawyers all comes down to treating it as an assistant, not as a substitute for your own professional judgment. At the end of the day, you are 100% responsible for the final work product.

To use it ethically, a few actions are non-negotiable:

Bar associations are still catching up with specific guidance, but the core principle is timeless: the attorney is always accountable. For example, The Florida Bar's Standing Committee on Professional Ethics issued guidance in early 2024 stating that lawyers may use generative AI but must protect client confidentiality and verify the accuracy of all AI-generated content.

ChatGPT is a language model, not a legal mind. It's incredibly good at processing text, extracting facts, and summarizing dense documents, which makes it a powerful tool for getting a first draft of a medical chronology off the ground.

However, it has zero ability to grasp legal nuance, understand jurisdiction-specific rules, or provide actual legal advice. For anything requiring deep legal reasoning—like interpreting an ambiguous contract or crafting a novel legal argument—it's simply not reliable on its own. It should always be used under the supervision of a qualified legal professional.

With the public version of ChatGPT, you can't. The only way to engage with general, public AI tools is to enforce a strict data anonymization protocol. All sensitive details must be swapped out for generic placeholders like 'Plaintiff' or 'Date of Incident.'

Never input personally identifiable information (PII), protected health information (PHI), or any confidential case details into a public AI model. Doing so creates an unacceptable risk of a data breach and a potential ethics violation.
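To make the placeholder idea concrete, here is a minimal Python sketch of a substitution pass. It is an illustration only and should not be treated as HIPAA de-identification. It assumes you supply the names and other known identifiers yourself, the regular expressions cover only a few common U.S. formats, and the function name, patterns, and sample text (including the fictitious name) are all hypothetical. A human still needs to read the result before it leaves the firm.

```python
import re

# Illustrative patterns for a few common formats; real records vary widely.
PATTERNS = [
    (re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "[SSN]"),
    (re.compile(r"(?<!\d)\(?\d{3}\)?[-. ]\d{3}[-. ]\d{4}(?!\d)"), "[Phone]"),
    (re.compile(r"\b\d{1,2}/\d{1,2}/\d{2,4}\b"), "[Date]"),
]


def anonymize(text: str, known_terms: dict[str, str]) -> str:
    """Replace known identifiers and simple patterns with generic placeholders."""
    # First swap out identifiers the user lists explicitly (names, employers, etc.).
    for term, placeholder in known_terms.items():
        text = re.sub(
            re.escape(term), lambda _match: placeholder, text, flags=re.IGNORECASE
        )
    # Then apply the pattern-based substitutions.
    for pattern, placeholder in PATTERNS:
        text = pattern.sub(placeholder, text)
    return text


sample = "Jane Roe was seen on 03/14/2024. Call (555) 123-4567. SSN 123-45-6789."
cleaned = anonymize(sample, {"Jane Roe": "Plaintiff"})
print(cleaned)
# Plaintiff was seen on [Date]. Call [Phone]. SSN [SSN].
```

Pattern matching will miss identifiers it was not written for, such as addresses, record numbers, or misspelled names, which is one reason the manual check remains essential.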

For absolute security, especially when you're dealing with sensitive medical records in PI cases, the only truly safe option is a closed-system, HIPAA-compliant AI platform built specifically for the legal field. This completely removes the risk of your firm's data being used to train a public model or getting exposed in a breach.

Ready to move beyond the limits of general AI and secure your firm's workflows? ProPlaintiff.ai offers a HIPAA-compliant, purpose-built AI operating system designed to handle the specific demands of personal injury law, from demand letters to medical summaries, with the security and reliability you need. Explore the platform at https://www.proplaintiff.ai.